xvYCC and Deep Color

If you scoured all of the details on the recent HDMI 1.3 release (and who didn't?), you may have noticed the inclusion of xvYCC and Deep Color. These are two different things that together will theoretically make displays' color more realistic. The short version is this: Deep Color increases the available bit depth for each color component, while xvYCC expands the overall color gamut. Sure they do, but why?

Isn't Color Color?

Essentially, all TVs use the primary colors of red, green, and blue to create all of the colors in a video signal. "Now wait a second," you may say (or not, but let's assume you do). "I learned in second grade from Ms. (not Mrs.) Driscoll that the primary colors are yellow, red, and blue." For finger paints and any ink or dye, that is close enough to true (printers use the more precise cyan, magenta, and yellow). This is called subtractive color. (See Figure 1.) The ink, like what you see printed on this page, absorbs part of the whitish light from your bathroom fluorescents and reflects back only the color you see (more or less). With additive color (Figure 2), light of a specific wavelength or wavelengths is being created. The easiest way to think about this is that, with additive color, light is being created directly; with subtractive color, light is reflected. In video, we deal with additive color, hence the RGB.

Thanks to the way the eye works, red, green, and blue are the colors that TVs add together to create other colors. So, red and blue mix together for magenta, red and green for yellow, blue and green for cyan. By varying the amount and intensity of each color, a display can create different shades. The more steps of gray (and, by extension, steps of each color) a TV can create, the more colors it has available on its palette to create a lifelike image. This palette is not infinite, as the color space in the video signal constrains it somewhat.
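For the tinkerers, here is a rough sketch of that additive mixing in Python. It assumes each color is a triple of 8-bit levels (0 to 255) and that adding light simply means adding the channels and clipping at the maximum. The `mix` helper and the color constants are made up for illustration; real displays do this in hardware and with more nuance.

```python
# Additive mixing sketch: a color is an (R, G, B) triple of 8-bit levels (0-255).
# Assumption: adding lights means summing each channel and clipping at 255.

def mix(*lights):
    """Add light sources channel by channel, clipping at the 8-bit maximum."""
    return tuple(min(255, sum(channel)) for channel in zip(*lights))

RED = (255, 0, 0)
GREEN = (0, 255, 0)
BLUE = (0, 0, 255)

magenta = mix(RED, BLUE)      # red + blue
yellow = mix(RED, GREEN)      # red + green
cyan = mix(GREEN, BLUE)       # green + blue
white = mix(RED, GREEN, BLUE) # all three at full intensity

# Lowering the intensity of each primary gives a darker shade of the same hue.
dark_yellow = mix((128, 0, 0), (0, 128, 0))

print(magenta, yellow, cyan, white, dark_yellow)
```

Dropping every channel to the same lower level gives the steps of gray mentioned above.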

Figure 1: Subtractive Color Mixing

Figure 2: Additive Color Mixing

Color Space?

What is red? What looks red to me may look reddish-orange or purplish-red to you. Color is in your head. So, there needs to be a standard. Otherwise, every TV show would look different, and, in reality, our very TV system wouldn't work.

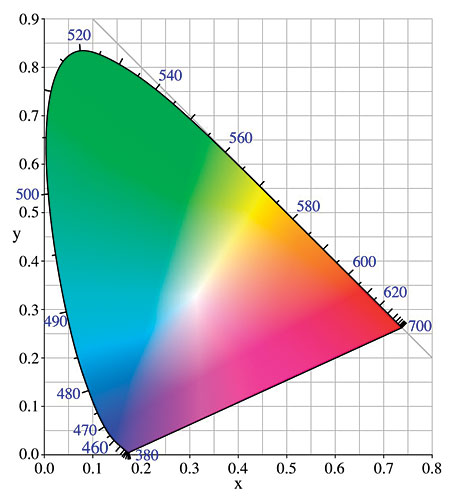

1990 saw the approval of Rec.709 (a.k.a. Recommendation ITU-R BT.709), which defined the exact red, green, and blue points for the upcoming HDTV standard. These points are "x" and "y" coordinates as found on a CIE (Commission Internationale de l'Éclairage or International Commission on Illumination) chromaticity diagram like the one in Figure 3. Without diverging too much from the topic at hand, the chromaticity diagram is a visual and numerical representation of color. Use it, and you'll never have to say, "Well, this one is kind of bluish-greenish with a little reddy-purple." With this specification in place, content creators for TV and movies can know that their cameras will output a certain color, they can edit and color-correct to specific colors, and then hopefully they can see it on a monitor as they saw it in the camera (or not, depending on what they were hoping for). Without this specification, each TV show, and even different cameras or editing equipment, would all assume a different specific red, green, and blue so that what you got at home could look all out of whack. In reality, it doesn't work perfectly, but this is the idea. If every step in the chain follows the standard correctly, the result in your home is colors that look the way they were supposed to, either compared with the real world (the field in football, for example) or somewhat off in a way that the director intended (like the greenish tint on CSI or the yellow-orange of CSI: Miami).

So, a TV with perfect color points (like the Samsung HL-S5679W I reviewed last month) can accurately reproduce exactly what the provider intended and every color within the triangle of its red, green, and blue color points.
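If you want to see what "within the triangle" means in numbers, the sketch below tests whether a chromaticity point falls inside the Rec.709 triangle. The primaries are the published Rec.709 x and y coordinates; the `in_gamut` function, the sign-based triangle test, and the sample "saturated green" point are illustrative choices of mine, not anything a TV actually runs.

```python
# Rec.709 primaries as CIE 1931 (x, y) chromaticity coordinates.
REC709 = {"red": (0.64, 0.33), "green": (0.30, 0.60), "blue": (0.15, 0.06)}

def _cross(o, a, b):
    """2-D cross product of vectors o->a and o->b (sign tells which side b is on)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def in_gamut(xy, primaries=REC709):
    """Return True if the (x, y) point lies inside or on the primaries' triangle."""
    r, g, b = primaries["red"], primaries["green"], primaries["blue"]
    d1 = _cross(r, g, xy)
    d2 = _cross(g, b, xy)
    d3 = _cross(b, r, xy)
    has_negative = d1 < 0 or d2 < 0 or d3 < 0
    has_positive = d1 > 0 or d2 > 0 or d3 > 0
    # Inside (or on an edge) when the point is on the same side of all three edges.
    return not (has_negative and has_positive)

d65_white = (0.3127, 0.3290)      # the Rec.709 white point
saturated_green = (0.20, 0.75)    # hypothetical sample point, chosen for illustration

print(in_gamut(d65_white))        # inside the triangle
print(in_gamut(saturated_green))  # outside the triangle
```

The white point lands inside, while the sample green sits above the green primary and so falls outside.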

But What if There's a Color Outside of the Triangle?

Well, that would be the issue here. There are plenty of colors that lie outside of the Rec.709 triangle that our eyes can see but that TVs can't reproduce. One industry pro commented to me that he had never seen an eggplant look right, for example. TV manufacturers, or, more precisely, their marketing departments, would have you believe that they have it all solved. Their TVs sport an "Ultrawide Color Gamut" or some similar line. But if you only expand the color gamut on one end (the display) while the signal still assumes Rec.709, the colors will look cartoonish. This is because, when the video signal tells the TV to create red, it creates its red, which may be some sort of über red and not at all what the content creator had in mind. This may make for wow factor on the show floor, but it doesn't make the image look realistic. Color is an important factor in choosing a display. (See the Face Off on page 62 for more.)

So, What's This xvYCC and Deep Color?

xvYCC (also known as IEC 61966-2-4) expands the color-gamut triangle but does so as a standard across the board. This gives access to deeper colors (a redder red, if you will) for content providers and all the way down to you at home. Interestingly, xvYCC doesn't do this by changing the Rec.709 primaries. Instead, it keeps those primaries as reference points and, with a whole lot of other math, makes use of signal values that fall outside the usual range. Those values correspond to colors outside the triangle. Simply, it allows for more room around the current RGB triangle.

Deep Color increases the number of bits available for transmission for each channel. This means that there are more shades available for a TV to mix together. So, for example, a TV that accepts the new standard in 12-bit form can mix together any one of 4,096 shades (levels of brightness) of each primary color for 68.7 billion possible colors (4,096 red x 4,096 green x 4,096 blue = 68,719,476,736 colors). HDMI 1.2 could only transfer 8 bits per channel. So, there were only 256 shades of each color to choose from and fewer colors overall (256 x 256 x 256 = 16.7 million). These different shades help decrease artifacts (like color banding) and increase color fidelity. The visible picture-quality increase from 8 bits per channel to 10 or even 16 (in its highest 1.3 form) has been and is still being debated, but having the ability to transmit xvYCC and Deep Color sure can't hurt. Together, they mean that there will be more and better colors for future displays.
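The arithmetic above is easy to check for yourself. The short sketch below counts the shades per channel and the total colors for a few bit depths; the function names are mine, and it assumes three channels with the same bit depth each.

```python
def shades_per_channel(bits):
    """Number of distinct levels a single channel can carry at this bit depth."""
    return 2 ** bits

def total_colors(bits):
    """Total combinations across three channels (red, green, blue)."""
    return shades_per_channel(bits) ** 3

for bits in (8, 10, 12, 16):
    print(f"{bits:>2} bits: {shades_per_channel(bits):>6,} shades per channel, "
          f"{total_colors(bits):,} colors")
```

Each extra bit doubles the shades per channel, so the total number of colors grows eightfold.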

Figure 3: A 1931 CIE Chromaticity Diagram

But There's a Catch

For a wider color gamut and a higher bit depth to actually deliver more realistic-looking pictures, every step in the chain needs to support them, as well. If the camera can only do Rec.709, it won't matter that your TV can do more than that, because that extra color isn't in the source (which is, uh, the situation we have now). If the camera can do xvYCC but the medium (say, HDTV broadcasts) can't, again, it won't matter that your TV can do it. In other words, for you to see the new colors, material will have to be shot, transferred, encoded, and mastered in xvYCC and Deep Color. Sure, you could fake the wider color gamut at the mastering stage, but this won't be true extra color.

Most importantly, the source itself (say, some future HD DVD or Blu-ray player) will have to be able to output the extra color (via HDMI 1.3 or greater) to get to your TV, which also has to be xvYCC and Deep Color capable. If any step doesn't have these, then you won't get the benefit.
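This weakest-link idea can be put into a toy model. In the sketch below, every stage in the chain has a bit depth and either supports xvYCC or doesn't, and what reaches your eyes is limited by the least capable stage. The `Stage` class, the `delivered` function, and all of the stage names and numbers are hypothetical, picked only to illustrate the point.

```python
from dataclasses import dataclass

@dataclass
class Stage:
    name: str
    bits_per_channel: int
    supports_xvycc: bool

def delivered(chain):
    """Return (bits, xvycc) that survive the whole chain: the weakest link wins."""
    bits = min(stage.bits_per_channel for stage in chain)
    xvycc = all(stage.supports_xvycc for stage in chain)
    return bits, xvycc

# Hypothetical chain: everything is Deep Color and xvYCC capable except the broadcast.
chain = [
    Stage("camera", 12, True),
    Stage("mastering", 12, True),
    Stage("broadcast", 8, False),
    Stage("HDMI 1.3 link", 12, True),
    Stage("TV", 12, True),
]

print(delivered(chain))  # limited by the broadcast stage
```

Swap the broadcast stage for one with 12 bits and xvYCC support, and the full capability makes it through.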

Given that some TVs don't have enough bits to do the current standard correctly, while others have wildly inaccurate color points, even this end of the chain isn't a given. It's a much bigger issue than just having the capability on the cable, isn't it?

A Step (All Is Not Lost)

The PlayStation 3 and PC create their own universe, so to speak, so they can do Deep Color now (if so enabled). Apparently, some camcorders that can do xvYCC will be coming to the market soon. So steps are being taken to get content. As you can imagine, film itself isn't bound by these standards. Only the mastering is. So, creating the content isn't a huge obstacle. The issue is getting that content to the consumer.

Should I Throw Out My TV?

Just because it's in the HDMI spec doesn't mean you'll be seeing it fully implemented any time soon. Sony showed a prototype LCD panel at CEATEC in October that was xvYCC compliant. As you've read, such a TV is only one part of the equation and, in reality, is the easiest part (when and if it ever ships). We'll need source material—and a source to output it—that can also do xvYCC and Deep Color (other than just a PC, PS3, or camcorder). Apparently, now that there is a way to transfer it, studios and manufacturers are both getting more interested in xvYCC and Deep Color. So, in other words, these are great ideas with lots of promise that we may see, but not any time soon.