I despise HDMI. If it were designed more for passing a signal instead of copyright protection there would be no problems. Most AV gear can be connected with component video and analog audio so why do we need this extra layer?

UHD Blu-ray vs. HDMI: Let the Battle Begin

Excited as a youngling on Christmas morning, I cracked open the box at once, thinking I would try the player's various built-in streaming options to look at some 4K content from YouTube, Netflix, and Amazon Prime. (While Vudu is also on the player, it hasn’t been enabled for 4K content yet.) In the past year I have upgraded my pre/pro to a Marantz AV8802A, and my projector to a JVC X750R, to make my system HDCP 2.2 and HDMI 2.0a compliant in anticipation of 4K content. Furthermore, I installed an Atlona (AT-UHD EX 70-2PS) HD-BaseT extender as well as an 18 gigabit-per-second (Gbps) high-speed rated Monoprice Cabernet active signal-boosting HDMI cable as a backup. These weren’t inexpensive upgrades at all (except for the Monoprice cable, perhaps), but I’m willing to sacrifice a lot to stay on the cutting edge of technology.

Needless to say, I was very disappointed when I hooked up the Samsung to my Marantz pre/pro's DVD input via a 2 meter (about 7 feet) Audioquest Carbon HDMI cable and got...a blank screen. What the…? Frustrated, I switched the pre/pro's HDMI output link to the projector from the Atlona HD-BaseT extender to the backup Monoprice cable and...nothing changed: blank screen.

I’m not one to give up so easily, so I pulled the Samsung out of my rack and hooked it directly to the JVC projector via the 2 meter Audioquest Carbon and...presto, we have a picture! Hmm. Upon initial setup, the player needed a firmware update, so I ran an Ethernet cable from my rack to the player (now sitting under the projector) and hoped the firmware update would fix the connection problem through my pre/pro. Sadly, it didn’t.

Early Adopter Blues

Venturing over to AVS Forum I discovered I wasn’t alone. We early adopters were in the same boat. Based on the other posts, it seemed like if you had more than a 5 meter HDMI cable running to your display you probably weren’t going to get a picture. Not promising for those of us who use projectors that are often a good distance from the rack. But why was this happening?

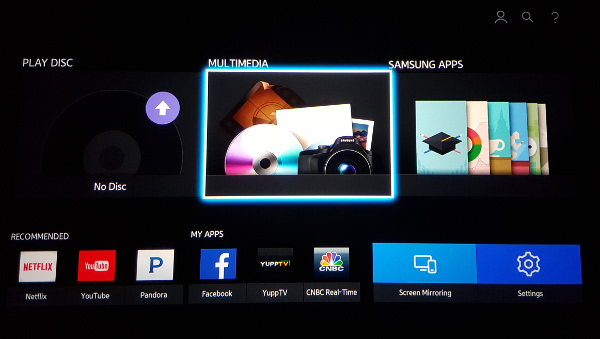

A little investigation (including looking at the player's output with a friend's HDMI signal analyzer) revealed that the Samsung player's home splash screen (above) gets output at a resolution of 3840 x 2160p at 60Hz with a YCbCr 4:4:4 8-bit signal type. This is the highest-bandwidth format provided for in the HDMI 2.0 spec, and it requires a bandwidth of just under 18 Gbps! Seriously? To add insult to injury, the Atlona product I had installed specifically states that it cannot handle 2160p/60Hz at 4:4:4, only with the less bandwidth-intensive 4:2:0 chroma subsampling (which signifies a 50 percent drop in data). Isn’t HDMI a wonderful thing?

I found a temporary workaround. I discovered I could put a Blu-ray or UHD Blu-ray disc in the player, start the disc, get a picture, then exit the disc and finally see the player's home splash screen menu. When the player is “tricked” this way, the splash screen locks into the last resolution used, which in the case of Blu-ray is 1080p/24Hz 8-bit, or with UHD Blu-ray, 2160p/24Hz 10-bit, which uses far less bandwidth. Unfortunately, though, as soon as I powered off the player, it reverted to the higher-bandwidth signal when I powered it back on. Talk about jumping through hoops!
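The bandwidth figures quoted above can be sanity-checked with a little arithmetic. The sketch below is a rough estimate, not a measurement: it uses the standard CTA-861 pixel clocks (which include blanking), counts 10 bits on the wire per 8-bit TMDS character across three data channels, and assumes 4:4:4 packing for the 24Hz modes, which the article does not state. The function name `tmds_gbps` is made up for illustration.

```python
# Rough TMDS link-rate estimate for the HDMI modes mentioned in the article.
# Pixel clocks are the standard CTA-861 values in MHz (blanking included).
PIXEL_CLOCK_MHZ = {
    ("1080p", 24): 74.25,
    ("2160p", 24): 297.0,
    ("2160p", 60): 594.0,
}


def tmds_gbps(resolution, refresh_hz, bits_per_component=8, chroma="4:4:4"):
    """Estimate the aggregate TMDS bit rate in Gbps (hypothetical helper)."""
    pixel_clock = PIXEL_CLOCK_MHZ[(resolution, refresh_hz)]
    # Deep color scales the TMDS clock by bits/8 relative to 8-bit video.
    tmds_clock = pixel_clock * bits_per_component / 8
    if chroma == "4:2:0":
        # 4:2:0 carries half the data of 4:4:4, so the clock is halved.
        tmds_clock /= 2
    # Three data channels, 10 bits transmitted per 8-bit character.
    return tmds_clock * 3 * 10 / 1000


if __name__ == "__main__":
    modes = [
        ("2160p", 60, 8, "4:4:4"),   # the Samsung splash screen
        ("2160p", 60, 8, "4:2:0"),   # what the Atlona extender accepts
        ("2160p", 24, 10, "4:4:4"),  # UHD Blu-ray playback (assumed packing)
        ("1080p", 24, 8, "4:4:4"),   # Blu-ray playback (assumed packing)
    ]
    for res, hz, bits, chroma in modes:
        rate = tmds_gbps(res, hz, bits, chroma)
        print(f"{res}/{hz}Hz {chroma} {bits}-bit: {rate:.2f} Gbps")
```

Under these assumptions the splash-screen mode works out to 17.82 Gbps, right at the 18 Gbps ceiling, while the same picture sent as 4:2:0 needs 8.91 Gbps, which matches the 50 percent figure above.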

All is Not Lost

I had been planning on doing an article on HDMI cables specifically related to 4K for some time and had been contacting suppliers in late December for samples, so my procurement of sample cables had already begun.

One supplier was MyCableMart.com, whose president wrote back to me that their current active 4K HDMI cable at 30ft was not certified to meet 4K@60Hz 4:4:4 and that it would only meet 4K@30Hz. It turns out that the current Redmere chip used for the signal-boosting won’t support that resolution—which explains why I ran into issues with the Monoprice that uses the same PRA1700 chipset. Regardless, he did inform me that he had new cables coming out in late January with a new Redmere chipset (PRA1700 Rev. A) that should be up to the challenge. That's what I ended up using for my test. Audioquest was also willing to send me samples of their different cables, but they were upfront in stating that, at cable lengths past 5 meters (about 16 feet), the chances of successfully passing such a high bandwidth signal diminish with each meter beyond that length.


Another thing that I hate is that you have no idea what you are buying and installing, because neither the package nor the item tells us whether we are getting a 1.4 or a 2.0 item. No markings on the product or packaging. This should be against the law of the electronics standards. The cable should have markings showing that it is HDMI, which series it is, at least what it can do, and a manufacturing date. What is happening is that manufacturers can dump old products on installers and buyers who cannot know what series they are installing. Should be against the law.

Maybe I misinterpreted the information I saw, but during the CES show, in viewing several videos on YouTube interviewing a number of "experts" from the various companies, I understood that the new UHD players would be able to automatically adjust their output depending on the connections and the monitor's video capability. Based on this experience, with the player reverting to the one maximum video output resolution without an option, that does not seem to be the case.

Perhaps just another reason never to buy the first generation product.

Phew, I guess the good news is that some high end cables work -- and that some vendors are admirably frank about the limits of their cables.

I wonder whether something like BlueJeans Cable is able to handle it at a lower price point.

Ever heard of DPL Labs? They offer the most stringent third party certification for 10.2 and 18 Gbps HDMI cables. (I have no financial affiliation with them.) Currently the ONLY cable for distance runs of 18 Gbps is Tributaries' Aurora OM3-based optical solution. HDBaseT chipsets are solely based on a 10.2 Gbps architecture. Due to the costs involved with developing a next generation version, we are unlikely to see an 18 Gbps HDBaseT chip for a VERY long time, if ever. The dirt-cheap HDMI cable companies like Monoprice, the ones that buy them by the container with no quality control and don't bother to certify them, will NEVER be the answer to such high demands on quality and data throughput. If you want real UHD/4K, you have to step up. This is not an "everybody gets one" situation. Don't you want to feel special? Don't you want to be the only kid on the block with that cool new toy? Buck up and pay for it.

This is useful information but meanwhile there's 4K discs to be bought and very little mentioned about them. They don't even have a demo set up yet at my local Best Buy. Definitely needs more time in the oven.

In over 55 years of A/V exposure nothing is worse than HDMI. If I had a penny for every time the hdmi cable became disconnected, froze up, or the inevitable 'handshaking' gremlin reared its ugly head I'd be a millionaire. What a piece of crap technology.

I totally agree that HDMI is useless. I know it's too late but it would have been nice if HDMI was researched/studied a little bit closer because I believe that it would not have been given the green light for public consumption. What a big mistake by the A/V industry!!!!!

Since your projector is not true 4K, I wonder if a native 4k projector from Sony or a 4k TV would help.

Since I assume you don't have a 4K Sony projector lying around, did you test any of this when hooked up to a 4K TV?

My Sony 4K VW1100 projector works well with an Audioquest Cinnamon 10-meter and this player, but I added another 2 meter hi-speed to it for a better rack position and it stops working.

The Sony 4K player has been working with the same installation for almost 4 years, although it is 8-bit 4:2:0, and the 2-meter extension does not affect it, but if I put an HDMI switcher in to manage the 4K sources, even the Sony player does not work.

However, there is no need for consumer content, which is typically stored or streamed as 4:2:0, to be upscaled for the purposes of local HDMI delivery to the display device.

All consumer 4K and non-4K content has been (and in the near future will be) the same way: 4:2:0.

Upscaling to a color subsampling of 4:4:4 or even 4:2:2 is a waste when the display has been doing that for years and will keep doing it. In doing so, the player is misusing HDMI bandwidth that is needed for the 10-bit 4K Blu-ray.

Thanks for the article, David, and for the experiment. The picture quality of the 4K Blu-ray Disc is excellent. Streaming 4K is a mixed bag depending on the camera, the source, the ability of the cameraman, and of course the butchering compression applied by most services, which also affects the 4K content I download from Sony's Entertainment Service.

Best Regards,

Rodolfo La Maestra

The one advantage of HDMI that was noted in the article is the lack of clutter, which also means ease of hookup for people who aren't in the least technical. A few years ago I was in Australia, and would be for the next 8 weeks, and my wife e-mailed me from home in the US saying that our AVR had died, and she couldn't watch TV anymore. Because everything was HDMI I was able to talk my daughter through the changes to cabling, which I would never have been able to do with DVI/RCA/optical.

Still, HDMI could be much better.

I'm a little confused by this article. I have had no issues with my HDMI cables. I run this cable with zero issues, straight from the player to my TV, and I believe it is one of the cables he listed as a fail. Is the real issue the projector, or is something else causing his problem? http://www.amazon.com/Monoprice-Cabernet-Certified-Supports-Ethernet/dp/...

Maybe it is because the JVC projector is not a true 4K projector? It is using shift, not true 4K chips. I'm using a Samsung 85" 4K TV (UN85HU8550) + Samsung Evolution Kit (SEK-3500U/ZA), which gives me 4K plus HDR10. Just a thought. Anyway, good luck. I just think it could be misinformation to say the cable is at fault.

I posted it in my first post. It is a Monoprice Cabernet Ultra Certified High Speed Active HDMI Cable 30ft Supports Ethernet 3D Audio Return and CL2 Rated. However, I also had no issues running it over my older Monoprice non-Cabernet 30ft 10 Gbps cable (my guess, since I bought it in 2011). You're the first person I have seen blame their 4K issues on cables. Normally it is a non-HDCP 2.2 device somewhere in the path. I'm active on several Facebook home theater sites and home theater forums and haven't seen any complaints of these issues. I'm normally the one with the issues: my just over a year old receiver didn't support HDCP 2.2, so I had to trade it in for one that did. I have now switched all my cables over to 18 Gbps rated ones to be safe, but for years all "you" experts have been telling us that the cables and ratings don't matter... Now all of a sudden they do? Heck, I know several people with your projector and the Samsung player running the Amazon Basic cables with no issues. Good luck with your articles, but it is articles like this, based on little real-world data, that are scaring people away from 4K. If you want me to take your article seriously, interview a few thousand people and get real data.

Since new technologies become obsolescent as quickly as new cars, I have no intention of buying any 4K UHD BD player, since that would mean having to buy a new TV and receiver, and I'm just not ready to shell out that kind of money or other resources to do so. By the time my two-year-old Oppo, Pioneer Elite receiver, and Panny plasma bite the dust, I'll consider new components. By that time perhaps we will be rid of HDMI. I know I'm dreaming but.... Regarding analog connections, a friend of mine just did that and got rid of all HDMI connections. He also has a 12K HT system. His one reason for doing so was not the poor HDMI connectors; he just could not stand the whole HDMI 'handshaking' crap. I am sorely tempted. I was watching the Masters yesterday and every time I switched channels I had to wait upwards of 6-8 seconds.

Shouldn't 18 Gbps HDMI be able to handle a 3840 x 2160p x 60fps x 10-bit 4:4:4 Y:Cb:Cr source?

Because 3840 x 2160 x 60 x 10 x 3 = 14,929,920,000 bits per second, or roughly 15 Gbps, and HDMI 2.0 can support transfers of up to 18 Gbps.

The 10 x 3 means 10 bits per channel times 3 channels of Y:Cb:Cr, btw.

So how come, when HDMI detects a 4K source at 60fps with full 4:4:4 Y:Cb:Cr sampling, it limits it to 8 bits per channel?
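One way to look at the question above: the roughly 15 Gbps figure counts only the visible pixels. The 18 Gbps limit applies to what actually travels over the link, which also includes the blanking intervals and the TMDS encoding overhead (10 bits sent for every 8 bits of data). A minimal sketch of that comparison follows, assuming the standard CTA-861 raster of 4400 x 2250 total pixels for 2160p/60; the function names are made up for illustration.

```python
# Compare raw picture payload with the on-the-wire HDMI rate for 2160p/60 4:4:4.
ACTIVE = (3840, 2160)    # visible pixels
TOTAL = (4400, 2250)     # CTA-861 raster for 2160p/60, blanking included
REFRESH_HZ = 60
HDMI20_MAX_GBPS = 18.0   # aggregate TMDS limit of HDMI 2.0


def payload_gbps(bits_per_component):
    """Active-pixel payload only: the calculation from the comment above."""
    width, height = ACTIVE
    return width * height * REFRESH_HZ * bits_per_component * 3 / 1e9


def link_gbps(bits_per_component):
    """Estimated wire rate: full raster, deep-color scaling, 10 bits per character."""
    pixel_clock_hz = TOTAL[0] * TOTAL[1] * REFRESH_HZ   # 594 MHz
    tmds_clock_hz = pixel_clock_hz * bits_per_component / 8
    return tmds_clock_hz * 3 * 10 / 1e9                 # three TMDS channels


if __name__ == "__main__":
    for bits in (8, 10):
        fits = "fits" if link_gbps(bits) <= HDMI20_MAX_GBPS else "does not fit"
        print(f"{bits}-bit: payload {payload_gbps(bits):.2f} Gbps, "
              f"link {link_gbps(bits):.3f} Gbps, {fits} in 18 Gbps")
```

By this estimate the 8-bit signal needs 17.82 Gbps on the wire and just fits, while the 10-bit version needs about 22.3 Gbps, which would explain why 2160p/60 at 4:4:4 tops out at 8 bits per channel.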

Have you considered an EDID manager?

Does your Pre-pro have built in EDID management?

There are EDID managers that you can dial in to whatever resolution you want to have or want to fake. They are very handy and can improve setup time between cycles. I've used very simple and inexpensive ones that look like a female to female connector as well as larger little black boxes that are powered, they've all worked fine. Sorry I don't have any brand recommendations.

Good luck!

Cheers,

Hi,

Understanding EDID wasn't as necessary before devices tried to do too much for you, what a pain.

I'm not sure if you're interested or even need the info, but here are some resources on understanding EDID:

http://www.analogway.com/en/products/software-and-tools/aw-edid-editor/#...

Click on the Download tab; there's a White Paper. And actually, even though you might not want or need to program your own EDID, running and understanding the EDID editor gave me a better understanding of EDID. The EDID editor is not product specific and can be used to edit and create EDID for any device.
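For anyone curious what an editor like that is working with, here is a minimal sketch of the EDID base block layout: 128 bytes, starting with a fixed 8-byte header, with a three-letter manufacturer ID packed into bytes 8 and 9 and a final checksum byte that makes all 128 bytes sum to a multiple of 256. The function names and the "ABC" manufacturer ID are placeholders for illustration, and the block built here is synthetic, not any real display's EDID.

```python
# Minimal look at the EDID base block: header, manufacturer ID, checksum.
EDID_HEADER = bytes([0x00, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0x00])
BLOCK_SIZE = 128


def edid_block_is_valid(block):
    """Check length, fixed header, and that all bytes sum to 0 modulo 256."""
    return (
        len(block) == BLOCK_SIZE
        and block[:8] == EDID_HEADER
        and sum(block) % 256 == 0
    )


def manufacturer_id(block):
    """Decode the three 5-bit letters (1 = 'A') packed big-endian in bytes 8-9."""
    word = (block[8] << 8) | block[9]
    return "".join(
        chr(((word >> shift) & 0x1F) + ord("A") - 1) for shift in (10, 5, 0)
    )


def make_example_block(mfg="ABC"):
    """Build a synthetic, mostly empty block with a placeholder manufacturer ID."""
    word = 0
    for letter in mfg:
        word = (word << 5) | (ord(letter) - ord("A") + 1)
    block = bytearray(BLOCK_SIZE)
    block[:8] = EDID_HEADER
    block[8] = word >> 8
    block[9] = word & 0xFF
    # The last byte is chosen so the whole block sums to a multiple of 256.
    block[127] = (-sum(block[:127])) % 256
    return bytes(block)


if __name__ == "__main__":
    block = make_example_block()
    print("valid:", edid_block_is_valid(block))
    print("manufacturer:", manufacturer_id(block))
```

The supported resolutions and color formats live further into the block and in extension blocks, which is the part an EDID manager rewrites to steer a source toward a lower-bandwidth mode.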

Cheers,

David,

Rather than fighting a battle with Samsung or trying your luck with the next 4K Blu-ray player, crossing your fingers that 2160/60p is output as 4:2:0 and not irrationally upscaled to 4:4:4 as you said the Samsung does, you may want to consider installing an HDFury Integral, which will bring the 4:4:4 2160/60p high-bandwidth output of the Samsung player back to its original 4:2:0 state so that your investment in equipment, HDMI chipsets, and cables can be reused.

This unit is a 2x2 HDMI 2.0a matrix switcher/splitter that also converts HDCP 2.2 to legacy HDCP to allow existing non-HDCP 2.2 AV Receivers to be on the 4K HDCP 2.2 signal path, which greatly simplifies the connectivity hurdles 4K brings to most legacy HT systems.

I just ordered a unit to review in my magazine (and to test with my two 4K players).

https://www.hdfury.com/shop/splitter...60-444-600mhz/

Good luck David,

Rodolfo La Maestra

I understand this is an old thread, but here are my comments anyway...

My TV is a regular HDTV (1080p, Sharp Aquos Quattron). I've never had any issues with HDMI at all in the 6 years I’ve had my system set up. I have a Yamaha AV receiver and everything is connected via HDMI, of course, except the loudspeakers, which use regular wires. I am now starting the process of upgrading my living room AV setup. I bought a Jamo S810 subwoofer (delivered and installed already) and their set of speakers (5.0), which are supposed to arrive next week. The Yamaha AVR is quite old (10 years), and it already has one burned channel (I rewired and use the B zone ports to bypass the burned channel in zone A). Not only is the AVR old and down a channel, it also does not support all the good stuff we have nowadays (eARC, Dolby Atmos, 4K, HDR, etc.). So, I am prepared to shell out another 600 dollars to upgrade the AVR. Now here's how my post is relevant to this thread: I am concerned that the HDMI cables I have, which run inside the wall, are not going to be able to support all these new technologies. The setup that I have is super neat! There are NO wires whatsoever dangling from my TV. The power outlet is behind the TV, and all cables go into a port also behind the TV and come out lower in the same wall, connecting to the AVR. So, all cables are completely hidden behind the wall, the TV, and the cabinet where the AVR is. I am DREADING the day I find out that the HDMI cables are no longer good for all that is to come. I'm not one of those "handy" guys who can fumble around with cables inside walls and get things working. But, since I'll probably be out of other options, I will try to fish the new cables through by using the existing cables. I should be able to tie them tight enough so I don't lose a cable midway through the wall... :-/ Hopefully.. Wish me luck. Maybe I luck out and the old HDMI cables I have will be able to handle it..

P.S. Yes, the TV will be replaced with a 4K HDR set soon as well, hence the potential need for new, higher-rated HDMI cables..
