Scaling: Size Matters

In the February 2005 issue of Home Theater, this column concentrated on deinterlacing, which is essential for all fixed-pixel displays. But that's not the whole story of video processing. Equally important is scaling, which is the process of changing the incoming video signal's resolution and aspect ratio to fit the display. Without scaling, the image you see on the screen might not use all of the available pixels, or some of it might be cropped out, leaving you with only part of the picture.

Scaling is particularly important because there are so many different types of video signals available today, from 4:3 480-line NTSC to anamorphic DVDs (which can have a variety of aspect ratios) to 16:9 720- and 1,080-line HDTV. Displays, too, come in many different aspect ratios and resolutions. Ultimately, the goal of scaling is to render all types of video signals on a given display with maximum image quality and minimum artifacts.

In a sense, there are two types of scalers: external and internal. External scalers are separate components that accept an input signal, scale it, and send the resulting signal to the display. Typically, they can be configured to output a variety of resolutions in order to accommodate different displays.

Internal scalers are built into displays, so they have only one output resolution: the display's native resolution. This allows manufacturers to optimize the scaler for each particular display. Internal scalers are also found in some source devices, such as upconverting DVD players, which scale the 480-line DVD signal to 720p or 1080i and send it out through the player's DVI or HDMI output.

A video scaler may be called upon to scale a low-resolution image up to a higher resolution, scale a high-resolution image down to a lower one, convert a 4:3 image to 16:9, and/or correct keystone distortion resulting from off-axis projection. The underlying processing is similar in each case, although these tasks call for increasingly sophisticated scaler operations, roughly in the order listed.

Before it can perform any actual scaling, the processor must digitize and deinterlace the video signal into complete frames. It stores each frame in a memory location called a frame buffer, where the scaler analyzes each pixel in the frame.

Digital FIR (Finite Impulse Response) filters perform this analysis. They are nothing more than mathematical algorithms that manipulate numbers. FIR filters include what are called taps, which, in a scaler, correspond to the Y/Cb/Cr digital component video values (that is, the intensity values of Y, Cb, and Cr, the digital versions of the more familiar Y, Pb, and Pr) of individual pixels.

As a scaler analyzes each input pixel, it takes into account some of the pixels surrounding it. The number of neighboring pixels the scaler uses in the analysis depends on the number of taps in the filters: The more taps, the more neighboring pixels the scaler considers, and the higher the quality of the scaled image. Why? Scaling normally adds pixels to (or removes them from) the input image, a process that is technically called interpolation. The goal of interpolation is to add or remove pixels so that the final image looks as if it had been that way all along. The more the scaler knows about the pixels in the neighborhood of each input pixel, the better it can interpolate what the output pixels should be.

The scaler measures the Y/Cb/Cr values for each input pixel and the surrounding pixels. It then multiplies those values by weighting factors assigned to each tap. These weighting factors define each input pixel's importance in determining the final output pixel. In general, the closer a tap is to the location of the pixel being analyzed, the higher its weighting factor, as closer pixels have more impact on the final output pixel than those that are farther away. The scaler then adds the results together to calculate the Y/Cb/Cr values for the final output pixels it sends to the display. The distribution of weighting factors among the taps is not simple or straightforward; this is the art of digital-filter design.
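The multiply-and-add step just described can be sketched in a few lines of Python. This is a minimal illustration, not any particular scaler's implementation: the five weighting factors below are made-up values, chosen only so that the centre tap counts most and the weights sum to 1.0 (an assumption that keeps uniform areas at their original brightness).

```python
def fir_output_pixel(tap_values, weights):
    """Return one output value (Y, Cb, or Cr) as the weighted sum of the
    input values under the filter's taps."""
    if len(tap_values) != len(weights):
        raise ValueError("need exactly one weighting factor per tap")
    # Multiply each tap's value by its weighting factor, then add the results.
    return sum(value * weight for value, weight in zip(tap_values, weights))


# Hypothetical 5-tap weighting factors: the centre tap (the pixel being
# analyzed) carries the most weight, and weights fall off with distance.
# They sum to 1.0, so a uniform area keeps its original value.
WEIGHTS = [0.05, 0.2, 0.5, 0.2, 0.05]

# Illustrative Y values for five neighboring pixels along one row:
# two dark pixels followed by three bright ones (a vertical edge).
row_y = [16, 16, 235, 235, 235]

print(fir_output_pixel(row_y, WEIGHTS))
```

The result falls between the dark and bright levels, pulled toward the bright centre pixel. Different weights would render the edge softer or sharper, which is where the filter designer's judgment comes in.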

In many scalers, filters analyze the surrounding pixels in the horizontal and vertical directions sequentially. These 1D filters are relatively simple and inexpensive, and they work well enough for rectangular resizing. However, 2D filters, which analyze pixels in both directions simultaneously, produce a much better result when performing horizontal and vertical keystone correction.
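To make the 1D approach concrete, here is a sketch of a rectangular resize done as two sequential passes: every row is resampled horizontally, then every column of that result is resampled vertically. For brevity it uses a 2-tap (linear) filter rather than the three to seven taps typical of real scalers, and it assumes output pixel centres are mapped back onto the input grid. Both are simplifications for illustration.

```python
import math


def resize_1d(line, out_len):
    """Resample one line of pixel values to out_len samples with a 2-tap
    (linear) filter: each output value is a distance-weighted blend of
    the two input pixels that straddle its position."""
    in_len = len(line)
    scale = in_len / out_len
    out = []
    for x in range(out_len):
        # Centre of output pixel x, expressed in input-pixel coordinates.
        src = (x + 0.5) * scale - 0.5
        left = math.floor(src)
        frac = src - left
        # Clamp indices so pixels at the picture edges are simply repeated.
        a = line[min(max(left, 0), in_len - 1)]
        b = line[min(max(left + 1, 0), in_len - 1)]
        out.append(a * (1.0 - frac) + b * frac)
    return out


def resize_separable(image, out_w, out_h):
    """Resize a 2D image (a list of rows) with two sequential 1D passes."""
    # Horizontal pass: resample every row to the new width.
    wide = [resize_1d(row, out_w) for row in image]
    # Vertical pass: resample every column of the intermediate image.
    cols = [resize_1d(list(col), out_h) for col in zip(*wide)]
    # Transpose the columns back into rows.
    return [list(row) for row in zip(*cols)]


# A tiny 2x2 "image": dark on the left, bright on the right.
print(resize_separable([[0, 100], [0, 100]], 4, 2))
# → [[0.0, 25.0, 75.0, 100.0], [0.0, 25.0, 75.0, 100.0]]
```

Each pass only ever looks along one line of pixels, which is what keeps 1D filters cheap; a keystone correction, where the sampling positions in one direction depend on the position in the other, does not split into two such independent passes.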

Most scalers use a fixed number of taps for the horizontal and vertical filters; typically, there are three to seven taps in the horizontal direction and three to five in the vertical. By contrast (gratuitous plug here), the Silicon Optix Realta HQV processor uses a single-pass 2D filter that can vary in size and aspect ratio for each pixel, up to a maximum of 32 by 32, yielding a total of 1,024 taps.

If the resolutions of the input and output images are different (which is the whole point of scaling), how can analyzing each pixel of the input signal create the proper output resolution? The answer is that the scaler doesn't perform this analysis precisely at the location of each pixel in the input image; instead, it performs it at the location of each pixel in the output image.
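The idea of working from the output grid can be shown with exact arithmetic. The sketch below is a hypothetical helper, not taken from any product, that finds where each output pixel falls on the input grid for a 720-pixel-wide line scaled to 1,920 pixels. It assumes the left edges of the two grids are aligned, which is only one of several conventions in use.

```python
from fractions import Fraction


def input_position(out_x, in_size, out_size):
    """Locate output pixel out_x on the input pixel grid.

    Returns (index of the input pixel at or to the left of that spot,
    fractional distance past it as an exact Fraction in [0, 1)).
    Assumes both grids start at the same left edge.
    """
    pos = Fraction(out_x * in_size, out_size)
    whole = pos.numerator // pos.denominator
    return whole, pos - whole


for x in range(9):
    left, frac = input_position(x, 720, 1920)
    print(f"output pixel {x}: input pixel {left} + {frac}")
```

Because 1,920/720 = 8/3, eight output pixels span exactly three input pixels, and the fractional offsets cycle through 0, 3/8, 3/4, 1/8, 1/2, 7/8, 1/4, and 5/8 before repeating. The filter is evaluated at those sub-pixel spots rather than at the input pixels themselves.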

For example, let's say the input is a 4:3 720-by-480 DVD image, and we want to display it on a 16:9 1,920-by-1,080 fixed-pixel device, which has six times as many pixels as the input source (2,073,600 versus 345,600). The scaler must therefore perform its analysis at an average of six different locations around each pixel of the input image (about 2.67 horizontally times 2.25 vertically), and these locations are separated by a fraction of a pixel width, which is also known as a subpixel. To accomplish this, the scaler slightly alters the weighting factors for the same set of taps in those six analyses. This has the effect of skewing them to one side or another of the input pixel's actual location. The skewed sets of weighting factors are technically referred to as different phases of the FIR filter, and the more phases a filter has, the more accurate the result will be.
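The phase idea can be sketched as a small polyphase filter. Weighting factors are precomputed for a fixed number of sub-pixel offsets, and each output pixel uses the set whose offset is closest to where it actually falls. The Catmull-Rom cubic kernel used to generate the weights, the choice of four taps, and the function names are all assumptions for illustration; a real scaler's coefficients come out of the filter-design work described earlier. The first `print` checks the six-to-one pixel count from the example.

```python
import math


def pixel_ratio(in_w, in_h, out_w, out_h):
    """How many output pixels there are for each input pixel."""
    return (out_w * out_h) / (in_w * in_h)


def catmull_rom(d):
    """Catmull-Rom cubic kernel: weight for a tap at distance d (in pixels)."""
    d = abs(d)
    if d < 1:
        return 1.5 * d**3 - 2.5 * d**2 + 1
    if d < 2:
        return -0.5 * d**3 + 2.5 * d**2 - 4 * d + 2
    return 0.0


def build_phase_table(num_phases):
    """One set of 4 weighting factors per phase. Phase p skews the same
    four taps (at -1, 0, +1, +2 pixels) toward an offset of p/num_phases."""
    table = []
    for p in range(num_phases):
        offset = p / num_phases
        table.append([catmull_rom(tap - offset) for tap in (-1, 0, 1, 2)])
    return table


def interpolate(line, pos, table):
    """Value of a line of pixels at fractional position pos, using the phase
    whose offset is nearest to pos (more phases = smaller rounding error)."""
    num_phases = len(table)
    left = math.floor(pos)
    phase = round((pos - left) * num_phases)
    if phase == num_phases:  # rounded up to the next whole pixel
        left, phase = left + 1, 0
    acc = 0.0
    for k, weight in enumerate(table[phase]):
        # Taps sit at left-1 .. left+2; edge pixels are repeated.
        j = min(max(left - 1 + k, 0), len(line) - 1)
        acc += line[j] * weight
    return acc


print(pixel_ratio(720, 480, 1920, 1080))  # 6.0
table = build_phase_table(8)
print(table[4])  # weights for an output pixel halfway between two inputs
print(interpolate([0, 10, 20, 30, 40, 50], 2.5, table))
```

Phase 0 reduces to [0, 1, 0, 0], simply copying the input pixel, while phase 4 splits the weight evenly between the two nearest pixels with small negative side lobes. With eight phases, an output position can be off by at most 1/16 pixel; doubling the phases halves that error.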

To see how this works, imagine a three-by-three grid of pixels that shifts up, down, right, and left by half a pixel width. This would require a nine-tap filter with four phases, and it would produce four output pixels located around the position of a single input pixel. In fact, the positions of the output pixels would overlap the position of the input pixel. The scaler would calculate the Y/Cb/Cr values of each output pixel based on the Y/Cb/Cr values of the nine input pixels in the grid, along with skewed weighting factors, which compensate for the four new pixel locations.
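Here is a sketch of that nine-tap, four-phase case, written as a 2x enlargement in each direction. One consistent reading of the example is that the four output pixels sit half an input pixel apart from one another, which puts each one a quarter pixel up or down and left or right of the input pixel's centre. That placement and the simple "tent" (bilinear) weights are assumptions, chosen so the numbers are easy to check.

```python
def tent(d):
    """Weight that falls off linearly with distance d, reaching 0 at 1 pixel."""
    return max(0.0, 1.0 - abs(d))


# The four phases: (dx, dy) offsets of each output pixel from the centre
# of the input pixel, in input-pixel units (negative = left/up).
PHASES = [(-0.25, -0.25), (0.25, -0.25), (-0.25, 0.25), (0.25, 0.25)]


def phase_weights(dx, dy):
    """Nine weighting factors (3x3) for one phase: each tap's weight depends
    on its distance from the skewed output-pixel position."""
    return [[tent(tx - dx) * tent(ty - dy) for tx in (-1, 0, 1)]
            for ty in (-1, 0, 1)]


def upscale_pixel(grid):
    """grid: 3x3 values (e.g. Y) centred on one input pixel.
    Returns the four output values, one per phase."""
    outputs = []
    for dx, dy in PHASES:
        weights = phase_weights(dx, dy)
        outputs.append(sum(grid[r][c] * weights[r][c]
                           for r in range(3) for c in range(3)))
    return outputs


# Brightness rising from left to right across the 3x3 neighborhood.
grid = [[0, 10, 20],
        [0, 10, 20],
        [0, 10, 20]]
print(upscale_pixel(grid))  # [7.5, 12.5, 7.5, 12.5]
```

The two left-hand outputs come out darker than the centre pixel's value of 10 and the two right-hand outputs brighter. The ramp therefore continues smoothly at the finer pixel pitch, instead of each input pixel being copied four times.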

If the DVD in the previous example was not recorded anamorphically, the final image will look horizontally stretched, since the scaler is filling a 16:9 screen with a 4:3 image. But the scaler should also be able to maintain the aspect ratio; in this case, a 4:3 image would occupy 1,440 by 1,080 pixels, leaving a 240-pixel-wide black bar on each side of the 1,920-pixel-wide screen. A third option is to use weighting factors that produce a "smart stretch," in which the sides of a 4:3 image are stretched more than the central region, a feature found in virtually all modern 16:9 displays.
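The aspect-ratio options can be sketched numerically. `pillarbox` computes the active picture width and side-bar width when a 4:3 picture keeps its correct shape. `smart_stretch_source_x` is a hypothetical mapping for a nonlinear stretch. It uses a cubic curve (an assumed choice; actual products use their own curves) whose slope at the centre is set so the middle of the picture keeps the same 2:1 output-to-input pixel ratio as the pillarboxed version, while the sides absorb the extra stretch.

```python
def pillarbox(aspect_w, aspect_h, out_w, out_h):
    """Keep the source aspect ratio: return (active width, width of each side bar)."""
    active_w = out_h * aspect_w // aspect_h
    return active_w, (out_w - active_w) // 2


def smart_stretch_source_x(out_x, out_w, in_w, centre_slope=4 / 3):
    """Hypothetical nonlinear ("smart") stretch: map output column out_x to a
    horizontal position in the input line. A cubic curve keeps the centre
    close to the correct shape and stretches the sides more.

    centre_slope = 4/3 undoes the uniform 4:3-to-16:9 stretch at the centre;
    it must stay below 1.5 for the mapping to keep increasing."""
    v = 2 * (out_x + 0.5) / out_w - 1                  # -1 (left) to +1 (right) across the output
    u = centre_slope * v - (centre_slope - 1) * v**3   # same range, but nonlinear
    return (u + 1) / 2 * in_w - 0.5                   # back to input pixel coordinates


print(pillarbox(4, 3, 1920, 1080))  # (1440, 240)

# Distance moved in the input for each one-pixel step in the output:
steps = [smart_stretch_source_x(x + 1, 1920, 720) - smart_stretch_source_x(x, 1920, 720)
         for x in range(1919)]
print(round(steps[959], 3), round(steps[0], 3))
```

With these assumed settings, each output pixel near the centre advances about half an input pixel (undistorted, as in the pillarboxed picture), whereas near the edges it advances only about an eighth of a pixel, so the sides end up stretched roughly four times as much horizontally.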

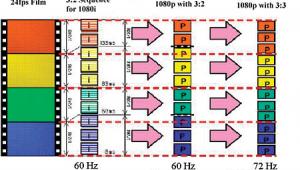

Many people mistakenly use the word "scaler" to mean the entire video processor, including deinterlacing and 3:2-pulldown detection. But the word is correctly applied only to the part of the processor that sizes the image and changes its resolution to match the display's. In a future issue, we'll discuss what to look for in a video processor's performance.

*Jed Deame is the co-founder and general manager of Teranex, a division of Silicon Optix. He developed the concept for the Teranex Video Computer platform and helped bring the technology to the single-chip Silicon Optix Realta HQV video processor.*
